A glimmer of hope for humans in journalism

A key new study shows that audiences seem to appreciate connect-the-dots journalism

Every day comes more news of artificial intelligence taking over the media world, creating efficiencies with such efficiency that one wonders whether any journalism jobs at all will survive. But a glimmer of hope emerges from Oxford’s Reuters Institute for the Study of Journalism (RISJ) in its 2024 Digital News Report.

The report, released Monday, comes amid a torrent of news about AI and the news. Bloomberg reported this week that India's public service broadcaster Doordarshan is now delivering news in Hindi to farmers via two realistic AI avatars, which read AI-generated scripts in natural tones. Real humans are still reportedly “checking and curating” – for now. That mirrors what has happened at some text-based outlets, with groups of writers being replaced by a single editor whose job is to make the texts sound more natural.

Even that need will soon diminish: One by one, media organizations are signing deals handing over their content to OpenAI and other botmakers, which will make the large language models even better, in turn compelling more people to treat them as a source of information – essentially, as their smartest (and most patient) friend.

The Reuters study, based on a YouGov survey of more than 95,000 people in 47 countries representing half of the world's population, of course finds layoffs everywhere, attributed to rising costs, declining revenues and sharp declines in traffic from social media. This phenomenon has famously led to media outlets being closed in many places – affecting especially local news in the US – and, in some countries, to outlets coming under the sway of business and government interests seeking to control narratives.

But there are also two fascinating findings buried deep in the report that run somewhat counter to the AI bandwagon.

First, it finds strong resistance to AI producing the news, because this is seen as undermining the trustworthiness which many readers prize, especially in political news (there was more openness with sports). Just over a third of respondents felt comfortable consuming news made by humans with the help of AI – which, of course, is already much of the news they consume. Even more starkly, only 19% were comfortable consuming news made by AI with human oversight – the model of the single editor editing bot output, or of the Indian TV avatars.

“News consumers are coming to the topic of AI with suspicion or fear,” the report says.

That is likely to change, though. The study itself also showed that the more people become aware of AI – the more they understand that it’s not just the Terminator movies’ version, developing an evil mind of its own and trying to take over the world – the more they accept it.

The second finding is more important and less likely to change: people are moving past the “just the facts” idea and are increasingly interested in connect-the-dots journalism.

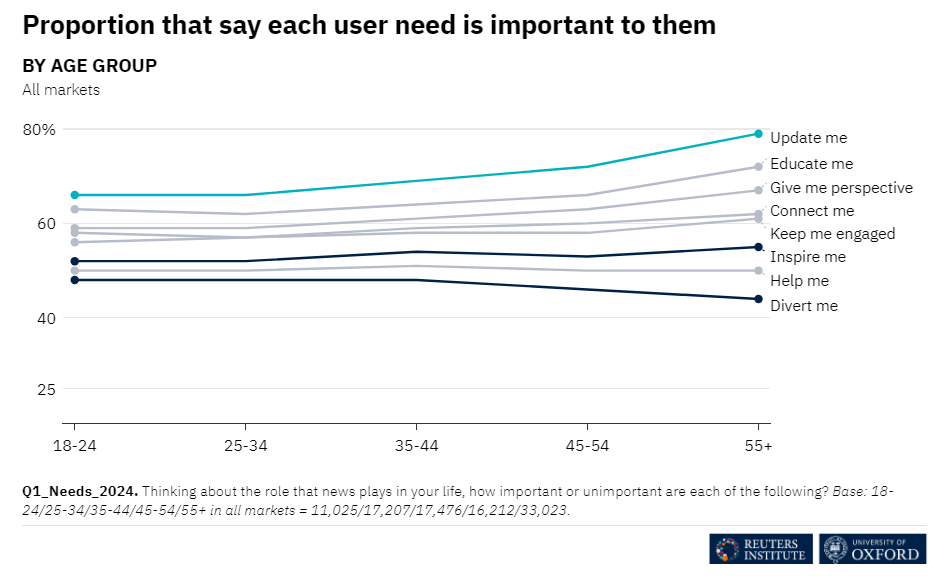

Across the 47 markets surveyed, “update me” was indeed cited as a need by 72%, but “educate me” and “give me perspective” were close behind with 67% and 63% respectively – well above “divert me,” cited by fewer than half. But even that obscures a critical nuance: the report found that people in countries with less press freedom, which make up most of those included, accounted for the slight edge for “update me.” In countries with more press freedom, where the search for facts is less of a struggle, the need for better understanding topped the chart.

“It is sometimes assumed that the public neither wants nor needs anything more than ‘just the facts’ from the news media,” the report says. “However, in countries where press freedom is higher, this is less of an issue, and the public instead is looking for ways of better understanding what is happening.”

This suggests, contrary to widespread perceptions, a wonderful maturity on the part of audiences in the developed world. And it makes perfect sense: As our world becomes ever more complex, it is impossible for anyone to understand enough for the purposes of voting intelligently without assistance. We all need help navigating the complex interplay between faraway turmoil, economic developments, scientific breakthroughs, political upheavals and human psychology – and how it affects our lives. The news media is the most efficient provider.

This is good news for the news media for two reasons. First, because analysis, investigation and opinion are more likely than straight news to create the distinctiveness that they need to maintain a loyal audience, which is the essential prerequisite for building a sustainable business model. And second, because – you guessed it – those are the areas less likely to be replicated by AI.

After all, there are two main reasons to suspect that AI may not be able to actually replace all of us.

First, credibility is critical to many audiences, and AI algorithms, however advanced, can still make errors or be manipulated. A human journalist can cross-check facts, appreciate nuance and make ethical judgments. The very fact that a human journalist can be fired (and can be expected to seek to avoid this) creates a sense of accountability. If AI generates false or biased content, who’s accountable? Who’s held responsible? The developers? Audiences will chafe at this.

Second, human journalists and news anchors, especially on TV, can create an emotional connection and sense of authenticity that AI is unlikely to replicate – not because of logic but because of psychology. While the head responds to efficiency, the heart wants empathy, tone, and personality. When we consume content, it’s often the heart that calls the shots.

That said, as AI technology continues to advance, the error rate will drop, and people will become accustomed to many of the same things that today they say they will resist. Familiarity with AI may breed contempt – but also yield normalization. Opinions may evolve.

So in the long term, what’s a young journalist to do? I’d say learn all you can about the world and develop a personal brand. But it might also be wise to play the lottery, develop a lucrative hobby or marry well.

I’ve been working with AI technologies since 1999. The first company I co-founded was an AI software company, and I’m still working with AI technologies today.

Let me say this about that:

AI is just a combination of computing and mathematics. What has changed is that compute power and storage have become massive, interconnected, and cheap, allowing us to do the same things we could do 20 years ago, except at scale, cheaper, and easier.

It’s not magic. It just looks that way.

AI is just an umbrella term for a collection of tech, and “generative” natural-language AI is one of several of those. It’s not new; it’s just new to the masses.

Generative AI is all the current rage, figuratively and literally. But its “magic” is looking at large data sets, and generating an inference or a very limited prediction from that historical data.

It can churn through many different possibilities and scenarios, and try to predict outcomes. But it is “probabilistic”. It over-weights historical patterns and under-weights outliers.

My point is that the future of success has always belonged, and will always belong, to outliers, aka innovators.

I can’t teach you how to be an innovator, except that it feels like being dissatisfied and annoyed with the status quo all of the time.

AI doesn’t get dissatisfied or annoyed. It just consumes patterns, summarizes, and predicts.

There is no “general artificial intelligence” coming. We don’t even understand how our own brains work, let alone know how to create an artificial one.

But we can fool you (marketing) into thinking that our math and computer power looks like one.

That works as long as you don’t look too closely. The hope is that you’ll become reliant enough on the AI to stop being dissatisfied and annoyed, give in, and stop innovating.

But whose fault is that?

AI currently just makes stuff up. I've personally experienced completely false AI explanations, where the AI tried and failed three times to answer my question. So AI journalism could easily be the same, gathering unverified information and presenting it as fact. I did note, on a science page, that someone had added a second AI to try to verify another AI's answer. It turns out that if a question is posed multiple times and the AI being analyzed gives several different explanations for the same thing, its answer is judged to be false.