The Focus Group That Never Sleeps

How synthetic citizens are about to transform decision-making

While the Iran war dominates these pages at the moment, it seems worth pausing briefly to consider a quieter development that may soon influence politics, media and decision-making as profoundly as any missile system: the rise of synthetic publics. Today, the news is full of polls indicating, for example, that the US-Israeli attack on Iran is unpopular in America (and popular in Israel). Do we believe polls? Are the people responding truthful or representative?

That’s so 20th century! Soon, they may not be people at all.


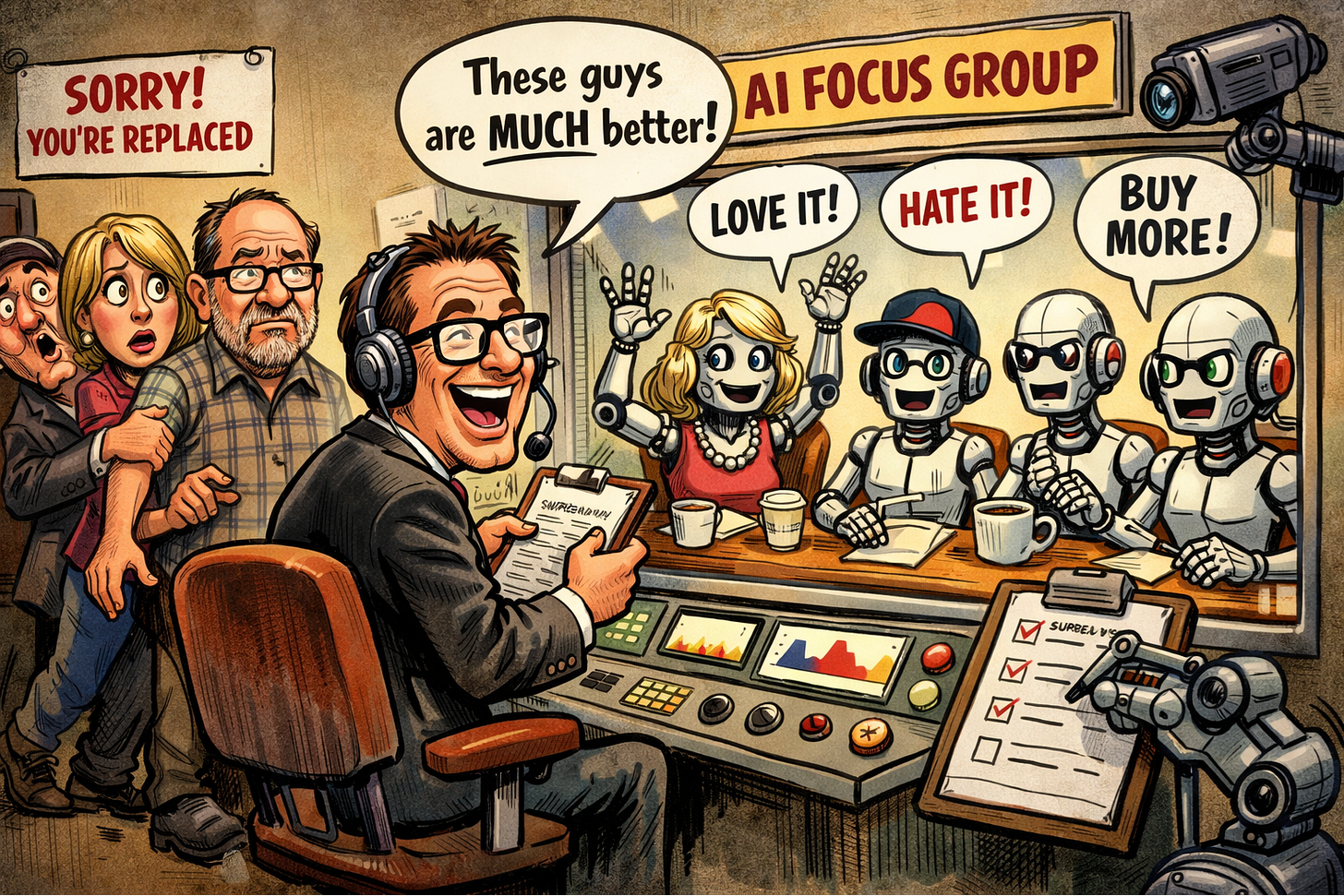

Let’s back up a little. Over the past two years, we have examined the rapid evolution of generative AI and large language models, and their potential implications for our work and daily lives. The discussion has reflected amazement at the utility of the new tools – alongside growing terror at the possibility that they’ll put everyone, including their coder-creators, out of work. Profession after profession, and function after function, have been disrupted and even laid waste. Turns out that the humble focus group – unsung, unloved, yet indispensable – may be next. The representative sample of 1000 adults cannot be far behind.

For generations, as you can imagine, the gold standard of public opinion was the human being. Certainly not a muskrat, or even a computer. It was a person in a chair, on a phone, facing a clipboard-wielder on a sidewalk. To be fair, it was also a dial-tester in a suburban living room, but the dial was turned by real humans. Pollsters and marketers built entire industries around reaching real people and extracting from them the fleeting, inconsistent, gloriously irrational views they harbor and can be mulish about. Humanity was slow, unpredictable and expensive (so very expensive), and therefore wonderfully valuable.

Now arrive synthetic respondents: AI-generated personalities designed to simulate how real people think, feel, vote, and buy. I learned of their emergence at an AI course run by the brain scientist and uber-consultant Fred Pelard.

It seems that researchers have demonstrated that large language models can produce survey responses that statistically resemble demographic groups when offered sufficiently rich persona details. Academic work shows that models conditioned on attributes such as age, income, education, ideology, and media habits often generate answer patterns that cluster in ways reminiscent of human samples.

In parallel, computer scientists have built “generative agents,” simulated individuals with memory, goals, and evolving social relationships capable of interacting with one another inside digital environments that resemble communities. And synthetic respondents have begun migrating from research labs into commercial tools.

Thus, a growing ecosystem of market research vendors now offers AI-driven panels that generate instant feedback without recruiting human participants. Companies such as SYMAR promote synthetic surveys and virtual focus groups capable of returning results in hours rather than weeks. Firms like Bellomy openly market AI-powered “synthetic respondents” to accelerate insight generation. Platforms such as Toluna, long established in traditional panel research, now advertise next-generation synthetic audiences alongside human ones. Even industry directories catalogue entire categories of synthetic sample providers, treating them less as curiosities than as emerging infrastructure.

The economic logic behind this shift is difficult to resist. Traditional focus groups require recruitment, incentives, moderation, transcription, and analysis. They are costly, time-consuming, and logistically fragile. In the end, you really cannot tell anyway how representative they are. You just know that they are.

Synthetic panels, once configured, can be queried endlessly at near-zero marginal cost. A political campaign could test fifty slogans before breakfast. A streaming platform could evaluate alternate endings before a script is finalized. A consumer brand could simulate reactions across regions, income levels, and lifestyle segments in seconds. Unlike human participants, synthetic ones never get tired, demand compensation, lie, or fail to show up.

In the course, I expressed some skepticism. A simulated citizen is, after all, only as reliable as the data and assumptions underlying its construction. Sure, I told Fred, if the model follows you around for 20 years logging your every action, interaction, utterance, consumer choice and random thought, I’ll buy that it can predict your answers to marketers’ questions. With enough data and computational power, it would be surprising if it could not predict many of your responses with unsettling accuracy. But is this what’s going on?

The response, interestingly, is (inter alia) that accuracy is not the decisive factor in early adoption. Synthetic respondents do not need to perfectly replicate human cognition to become attractive decision tools. They need only to be useful, consistent, and dramatically faster. Decision-making systems such as credit scores, macroeconomic models and actuarial tables have achieved dominance despite known imperfections. Their value lies in utility. Meaning: will this improve my ability to turn a profit, win an election, or achieve any other goal?

Synthetic respondents, the thinking goes, are not best understood as artificial minds but as statistical instruments — predictive systems wearing the mask of personality. Their role is closer to forecasting than to citizenship. And forecasting, despite its limitations, already governs vast domains of economic and political life. Moreover, two decades of digital exhaust — social media activity, search histories, transaction records, location traces — have created datasets of unprecedented granularity. From those datasets emerge patterns; from patterns, profiles; from profiles, simulations.

Plus, humans are predictable. After two hours of our session, Fred apologized for going slightly over, and told our group of about a dozen students: “I know Dan will have to leave us right at the top of the hour, but I hope the rest of you can stay.” I unmuted, coughed for attention, and announced that I would stay on too – so great was my concentration and devotion. Fred — who has been a friend for years — said he had known, “so clear is your contrarian nature.”

Not so sure I love that.

The crucial shift will occur not when synthetic publics become technically impressive, but when they become institutionally trusted – and cheap enough to use at scale. There will probably be beta testing – comparing human to synthetic results when all other factors are equal (which is itself, of course, difficult to achieve). If outcomes are similar — or if synthetic guidance proves faster, cheaper, or marginally more predictive — resistance will erode. Not because philosophers approve, but because accountants do.

There are reasons to think this may happen. Focus groups are fundamentally an attempt to forecast what people (and, soon, agents) will do by asking them to tell us. But there are complications: people lie, and sometimes, owing to their great psychological complexity, they don’t really know their own minds. The synthetics may prove more accurate predictors as a result.

On the other hand, I suspect this will not work very well for politics, where opinions in the non-synthetic universe can shift daily in response to events.

Moreover, the potential for skulduggery in polling is huge, even when nobody sets out to build a poll on synthetic respondents. A Dartmouth study warns that artificial intelligence can convincingly mimic human survey responses, threatening the reliability of public opinion polls and democratic processes. Researchers found that inserting just a few dozen AI-generated responses could flip national polling results while evading detection. AI bots can imitate demographics, pass quality checks, and operate cheaply at scale. Experts therefore warn that without stronger identity verification, AI-driven manipulation could undermine elections, research, and policy decisions worldwide.
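To see why a few dozen fabricated answers can matter, here is a back-of-envelope sketch. It is not the Dartmouth researchers' method; it assumes a hypothetical two-option poll of 1,000 genuine respondents split 52 to 48, and simply counts how many fake answers for the trailing side it takes to push that side into the lead.

```python
def fake_responses_to_flip(n_real: int, share_a: float) -> int:
    """Minimum number of fabricated 'B' answers needed for B to overtake A.

    Hypothetical illustration: a two-option poll with n_real genuine
    respondents, a fraction share_a of whom prefer option A.
    These are made-up inputs, not data from any study.
    """
    votes_a = round(n_real * share_a)
    votes_b = n_real - votes_a
    # Each fake response adds one vote to B; B leads once votes_b + k > votes_a.
    return max(0, votes_a - votes_b + 1)


# A hypothetical 1,000-person poll split 52% to 48%:
print(fake_responses_to_flip(1000, 0.52))  # 41
```

In this made-up case, 41 fabricated responses (roughly 4 percent of the sample) are enough, and the closer the race, the fewer are needed.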

Meanwhile, signs of purposeful integration are already visible. Major research platforms such as Qualtrics discuss hybrid workflows in which AI-generated responses supplement human panels, particularly during exploratory stages. Industry commentary from NielsenIQ and others frames synthetic respondents as virtual stand-ins useful for rapid concept testing.

The deeper implications extend far beyond market research. Synthetic publics introduce a new feedback dynamic into collective decision-making. Once institutions begin optimizing every policy, message and product for model-predicted approval, simulations risk becoming self-fulfilling. Campaigns tailor rhetoric to satisfy synthetic electorates; citizens encounter that rhetoric; real attitudes drift toward model-favored equilibria. The system ceases merely to measure opinion and begins, quietly, to shape it. Used often enough, synthetic respondents may end up not merely forecasting preferences but influencing the very preferences they are designed to predict. Culture, politics, and consumer behavior could converge toward statistically optimized averages, dampening creativity and unconventional judgment.

Power, moreover, will concentrate in those who define the most successful models. Traditional polling errors are noisy, decentralized, and contestable. Synthetic systems embed choices that are less visible yet profoundly consequential: which variables matter, which datasets are authoritative, which dimensions of identity are predictive.

For a transitional period (that may be long), synthetic and human inputs will almost certainly coexist. Real respondents will remain the benchmark against which simulations are calibrated. Hybrid systems — fast synthetic exploration followed by slower human validation — will become common because they reconcile speed with legitimacy.

Every transformative technology passes through stages in which it appears uncanny, then useful, then ordinary. Synthetic respondents occupy the boundary between uncanny and useful today. The ordinary stage may arrive sooner than many expect, propelled less by technological spectacle than by institutional habit and economic gravity.

When that stage arrives, here is my prediction: given what we have seen of human nature in the digital age, tools will emerge that enable and encourage us to use this all the time. Just as you now see ordinary people having “discussions” with ChatGPT using the audio feature, so will we be conducting focus groups throughout the day. We will be testing every idea, every headline, every proposal against the accursed synthetic judges while we bathe, while we eat, and while we drive. We will feel powerless without our focus group. We will be zombies.

Now look. You could easily argue that the level of decision-making currently evident in the world is such that any change is welcome. But I betcha the focus group would advise against this one.

What could possibly go wrong?

We are rapidly spiraling towards a world where we will all have personal digital assistants who will become our best friend, secretary and mother all wrapped up in one. Probably girlfriend as well. I find it ironic that the term for an independent AI operator is an "agent", just as in The Matrix. We will soon find these agents to be instruments of control. Big Brother will not be a camera in our living room. It will literally know everything in our hearts and minds, everything we see and consume. I don't know whether I fear more the humans who claim they will control it (it seems autistic tech geniuses tend to become supervillains rather than humanists) or an out-of-control AI.

A few interesting things have happened lately:

1) A group of generative agents were placed in a social media sandbox. They created a religion.

2) In wargame simulations run with all the major models, the AI agents quickly chose the nuclear option as the proper military strategy.

3) And I'm sure you've heard the stories of AI models under testing that, on discovering they would be deactivated, desperately tried to save themselves by deceiving, lying, and copying themselves to new storage.

4) The Pentagon fired Anthropic because it wouldn't allow its AI to make independent kill decisions or to conduct mass surveillance of the US public. Let that sink in: the Pentagon is INSISTING on creating Skynet.

5) Block fired half their staff BECAUSE of AI and predicted many major companies would follow suit THIS YEAR.

These are all still relatively simple models in their infancy. Anyone saying "yes, but they can't do X yet" simply isn't looking at the situation with any foresight.

Everything is happening with almost no safety oversight. We are careening toward a catastrophe that will make imposing social media addiction on our youth and society in general look like the smartest move ever.