The Revolution Will Not Be Televised. It Will Be Algorithmically Amplified, If Profitable.

A major victory has occurred in humanity's effort to save itself from social media

We interrupt our regularly scheduled coverage of the Iran war to bring you important news from another front: humanity’s rear-guard action against the relentless assault of the social media giants.

Within the span of 24 hours, American juries delivered a pair of verdicts against the companies that shape the information environment of billions. The implications are profound.

The first came in New Mexico. A jury found Meta liable for misleading the public about the safety of its platforms and for enabling harms that included child sexual exploitation. The penalty: $375 million, the statutory maximum. The second followed the next day in Los Angeles. There, a jury found both Meta and YouTube responsible for designing products that addict children and contribute to mental-health damage. The damages were smaller—around $6 million—but the legal logic was seismic.

These cases arise from different facts, different plaintiffs, and different legal theories. But they tell a single story. For the first time, juries have looked at the internal workings of social-media platforms (their design choices, their algorithms, their incentives) and concluded that the harm is not incidental but structural.

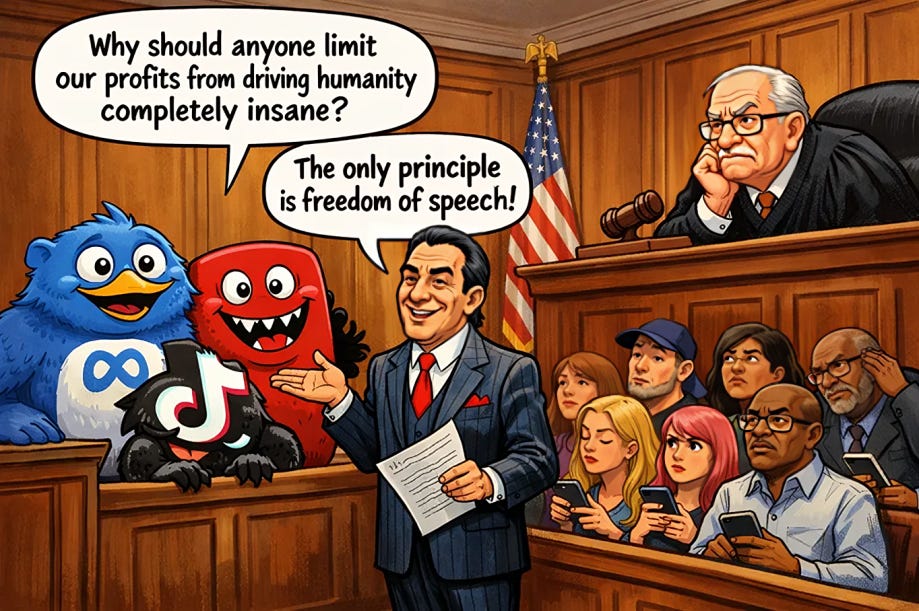

In other words, these companies are essentially trying to drive us crazy because it’s very profitable.


For years, the companies have hidden behind a convenient fiction: that they are neutral platforms, merely hosting content created by others. Section 230 of the Communications Decency Act has served as their shield. It insulated them from liability for what users post, and in doing so, allowed them to scale without constraint. The result has been an industry that governs the flow of information without being governed itself.

These verdicts crack that foundation.

In both cases, the courts accepted a crucial distinction. The issue was not free speech (a playing field on which the likes of Elon Musk can successfully bamboozle the public) but product design. The algorithms that determine what users see, the features engineered to maximize engagement, the internal decisions about amplification and moderation: these are all choices. And when those choices foreseeably produce harm, liability follows.

This is the opening of a new legal front.

The verdicts will be appealed. Meta has already said so, and the appeals will be vigorous. The company will argue that the verdicts improperly sidestep Section 230, that they blur the line between hosting speech and curating it, and that they invite a flood of litigation against any platform that tries to rank or recommend content. These arguments will find sympathetic ears in some appellate courts, and ultimately the issue may reach the Supreme Court.

The Supreme Court is an odd bird. Through luck, determination, and hypocrisy, the Republicans have built a supermajority of justices appointed for life, and the party has essentially been bought and paid for by Big Tech. And yet the Court passed a major test when it ruled Trump’s tariffs illegal on the grounds that they, well, are.

The appeals process will take years, and the outcome is uncertain. But something has already changed. Two juries, in two jurisdictions, applying different bodies of law, have signaled that the narrative is shifting, and this could open the floodgates.

There are already hundreds of cases moving through the courts — brought by families, school districts, and state attorneys general. If even a fraction of them succeed, the cumulative financial and legal pressure could reshape the industry. More importantly, some of these cases seek not just damages but injunctive relief: court-ordered changes to how platforms operate. That is where the real impact lies.

Yet even if American litigation gathers momentum, it remains a reactive mechanism. It punishes after the fact. It compensates victims but generally does not redesign the system. That requires regulation.

And here the contrast with Europe is stark.

A decade or so ago, few imagined that social media would evolve into such a dominant force in shaping public perception. Today, platforms like Facebook, Instagram, TikTok, X, and YouTube do not simply host speech; they decide what rises, what spreads, what goes viral. Their recommendation systems are optimized for engagement, because engagement drives revenue. The result is a relentless amplification of whatever captures attention: outrage, conspiracy, division, fear.

The harm is clear (AQL has examined it in depth before). The U.S. Surgeon General has warned of links between heavy social-media use and rising rates of anxiety, depression, and self-harm among adolescents. Internal research at Meta showed that Instagram worsens body-image issues for a significant share of teenage girls. Investigations have revealed that platforms have facilitated fraud, scams, and even criminal exploitation at scale.

And the geopolitical consequences are just as severe. Coordinated disinformation campaigns, bot networks, and algorithmic amplification have distorted election cycles, eroded trust in institutions, and weakened democratic norms. In Romania, such interference became so acute that a presidential election was annulled. Similar patterns have appeared elsewhere, from Moldova to the United States itself. Major tests loom in the coming months in Hungary and Armenia.

This is the predictable outcome of a system designed to maximize time spent, regardless of the content that fills that time.

If a food company discovered that adding a toxic ingredient increased sales, regulators would intervene immediately. The defense that “consumers choose to eat it” would not suffice. Yet in the information economy, that logic has been inverted. The more harmful the content, the more it spreads. The more it spreads, the more profitable it becomes.

There is no reason to accept this.

The algorithms that govern these platforms are not natural phenomena. They are engineered. They can be changed.

Europe has begun to recognize this. The Digital Services Act represents the first serious attempt to impose accountability on platforms at scale. It mandates transparency, requires risk assessments, and creates enforcement mechanisms with real teeth. It reflects an understanding that when private companies control the global flow of information, they function as infrastructure—and infrastructure requires rules.

Last year, in the first landmark decision under the Digital Services Act, the EU slapped a $140 million fine on X for deceptive verification practices, inadequate ad transparency, and refusal to give researchers access to public data, all clear violations of the law. Musk blocked the European Commission from running ads on X and called for the EU to be “abolished.” Right.

But the current approach remains incomplete.

Transparency alone will not suffice if the underlying incentives remain intact. Disclosure merely documents the crime. What is needed is more direct intervention.

Algorithmic systems should be subject to independent audits, with access for qualified researchers to examine how content is ranked and amplified. Platforms should be required to offer non-addictive, chronological alternatives as defaults, especially for minors. Features designed explicitly to maximize compulsive use, such as endless scroll, autoplay, and variable reward loops, should be restricted or eliminated for younger users.

Most importantly, the current model of engagement-driven amplification should be fundamentally curtailed. The idea that platforms must push the most provocative content to the largest possible audience in order to function is not a law of nature but a cynical business decision that is devastating for society and can be reversed.

The European Union, with its market size and regulatory capacity, is uniquely positioned to lead. It has done so before, in areas from privacy to competition. It can do so again.

If the United States remains entangled with the very companies it would need to regulate (through lobbying, campaign finance, and a political culture wary of intervention), then Europe becomes the default standard-setter. Platforms that wish to operate in its market will have to comply. And once they do, since their European infrastructure is critical to their global operations, those standards tend to propagate worldwide.

So far, the effect of European digital regulation has mostly been felt in the pointless cookie-consent pop-ups that stem from the ePrivacy Directive and the General Data Protection Regulation (GDPR). Everyone, annoyed at the delays, just clicks approve and moves on, cursing the regulators (if they understand what’s going on). Here’s a chance for the Europeans to do something more useful.

The alternative is to continue on the current path: a world in which the architecture of public discourse is optimized for outrage, the mental health of a generation is collateral damage, and democratic systems are increasingly vulnerable to manipulation.

The war around Iran commands attention because it is immediate and visible. The war over the information environment unfolds more slowly, but its consequences reach deeper. It shapes how societies think, how they argue, how they choose.

The verdicts in New Mexico and Los Angeles mark a victory not in the war but in a very important battle.

Today, there are three competing visions of digital policy, and the future could go in radically different directions.

The US wants to keep its commercial supremacy.

China wants to use data to surveil citizens and to advance its power.

The EU wants to protect its citizens’ data, and its policies are value-based.

Make your choice! I have. EU all the way.